Quantum error correction protects information by periodically checking for errors and fixing them. Each check costs time and introduces its own noise; skipping checks lets errors accumulate unchecked. How often should you check?

More concretely: a surface code quantum memory works by repeatedly measuring stabilizers — a process called syndrome extraction (SE). Each round takes physical time (say $\tau = 1\,\mu\text{s}$), and between rounds the qubits sit idle, accumulating errors. If you want to keep a logical qubit alive for a fixed duration $T$, you have a choice: how long to wait between SE rounds?

Frequent SE rounds catch errors early but cost more rounds of noisy gates over the lifetime of the memory. Infrequent SE rounds mean fewer total rounds, but each round faces a larger backlog of idling errors. Somewhere in between is an optimum. To find it, we first need the error channel for an idling qubit.

The Idling Channel

Model the noise on an idling qubit as three independent Poisson processes: $X$, $Y$, and $Z$ errors, each arriving at rate $p/3$ per unit time. After time $\Delta t$, the count of each error type is Poisson-distributed with mean $p\Delta t / 3$. The total error rate is $p$ per unit time, split equally among the three types.

Since $X^2 = Y^2 = Z^2 = I$, only the parity of each count matters — a qubit hit by $X$ five times is in the same state as one hit once. So the effective channel depends only on whether each of the three Poisson counts is even or odd.

For a Poisson random variable $N$ with mean $\mu$:

$$ P(N \text{ even}) - P(N \text{ odd}) = \sum_{k=0}^{\infty} (-1)^k \frac{e^{-\mu}\mu^k}{k!} = e^{-2\mu} $$

so $P(N \text{ odd}) = (1 - e^{-2\mu})/2$. With $\mu = p\Delta t/3$ and writing $\alpha = e^{-2p\Delta t/3}$, define

$$ q \equiv P(\text{odd}) = \frac{1 - \alpha}{2}, \qquad r \equiv P(\text{even}) = \frac{1 + \alpha}{2} $$

The net Pauli applied to the qubit is determined by which types fired an odd number of times. Since Pauli operators compose as $XY \sim Z$, $XZ \sim Y$, $YZ \sim X$, and $XYZ \sim I$ (where $\sim$ means equal up to global phase, which is undetectable):

| $X$ parity | $Y$ parity | $Z$ parity | Net Pauli |
|---|---|---|---|
| even | even | even | $I$ |
| odd | even | even | $X$ |
| even | odd | even | $Y$ |
| even | even | odd | $Z$ |
| odd | odd | even | $Z$ |
| odd | even | odd | $Y$ |
| even | odd | odd | $X$ |
| odd | odd | odd | $I$ |

Each error Pauli appears in exactly two rows: once as a single error (one type odd, two even) and once as the complementary double error (two types odd, one even). By independence of the three Poisson parities:

$$ P(X) = qr^2 + q^2r = qr(r + q) = qr $$

and identically for $Y$ and $Z$. The identity also appears in two rows: $P(I) = r^3 + q^3$. Normalization is automatic: $r^3 + q^3 + 3qr = (r + q)^3 = 1$.
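The parity bookkeeping above is easy to check numerically. The following is a minimal sketch, not part of the original analysis: the helper names (`parity_probs`, `poisson_odd_by_sum`, `net_pauli`, `channel`) are hypothetical, the rate and idle time in the example are arbitrary, and the Pauli is encoded as a pair of (x, z) bits purely for convenience.

```python
import itertools
import math


def parity_probs(p, dt):
    """Return (q, r) = (P(odd), P(even)) for a Poisson count with mean p*dt/3."""
    alpha = math.exp(-2.0 * p * dt / 3.0)
    return (1.0 - alpha) / 2.0, (1.0 + alpha) / 2.0


def poisson_odd_by_sum(mu, terms=100):
    """P(N odd) from a truncated sum over the Poisson pmf (independent check)."""
    term = math.exp(-mu)  # pmf at k = 0
    total = 0.0
    for k in range(terms):
        if k % 2 == 1:
            total += term
        term *= mu / (k + 1)  # advance to the pmf at k + 1
    return total


def net_pauli(x_odd, y_odd, z_odd):
    """Net Pauli (up to global phase) from the three parities, via (x, z) bits."""
    x_bit = x_odd ^ y_odd  # X and Y both carry an X component
    z_bit = z_odd ^ y_odd  # Z and Y both carry a Z component
    return {(0, 0): "I", (1, 0): "X", (1, 1): "Y", (0, 1): "Z"}[(x_bit, z_bit)]


def channel(p, dt):
    """Add up the probabilities of the 8 parity patterns, grouped by net Pauli."""
    q, r = parity_probs(p, dt)
    probs = {"I": 0.0, "X": 0.0, "Y": 0.0, "Z": 0.0}
    for bits in itertools.product((0, 1), repeat=3):
        weight = math.prod(q if b else r for b in bits)
        probs[net_pauli(*bits)] += weight
    return probs


# Arbitrary example values: rate p = 0.01 per unit time, idle time dt = 5.
q, r = parity_probs(p=0.01, dt=5.0)
probs = channel(p=0.01, dt=5.0)
print(probs)
print("qr =", q * r, " r^3 + q^3 =", r**3 + q**3)
```

Each of `probs["X"]`, `probs["Y"]`, and `probs["Z"]` should agree with `q * r`, and `probs["I"]` with `r**3 + q**3`, up to floating-point rounding.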

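Substituting $q = (1 - \alpha)/2$ and $r = (1 + \alpha)/2$, so that $qr = (1 - \alpha^2)/4$:

$$ P(X) = P(Y) = P(Z) = \frac{1 - e^{-4p\Delta t/3}}{4}, \qquad P(I) = \frac{1 + 3e^{-4p\Delta t/3}}{4} $$

This is a depolarizing channel (as it had to be, by symmetry between $X$, $Y$, $Z$). The total depolarizing probability is

$$ p_{\text{dep}}(\Delta t) = P(X) + P(Y) + P(Z) = 3qr = \frac{3}{4}\left(1 - e^{-4p\Delta t/3}\right) $$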

For small $\Delta t$, this gives $p_{\text{dep}} \approx p\Delta t$ — errors grow linearly, as expected. For large $\Delta t$, $p_{\text{dep}} \to 3/4$, the maximum value (the maximally mixed state). The saturation is physical: once the qubit is fully randomized, further noise can't make things worse.
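Both limits can be seen numerically. The sketch below assumes the closed form $p_{\text{dep}}(\Delta t) = \tfrac{3}{4}(1 - e^{-4p\Delta t/3})$, which follows from $3qr$ with the definitions of $q$ and $r$ above. The function names and the rate `p = 1e-3` are illustrative choices, and `idle_time_for` is simply the algebraic inverse of that formula, not something taken from the original post.

```python
import math


def p_dep(p, dt):
    """Total depolarizing probability after idling for dt at error rate p."""
    # expm1 keeps precision when p*dt is tiny: 1 - exp(-x) == -expm1(-x).
    return -0.75 * math.expm1(-4.0 * p * dt / 3.0)


def idle_time_for(p, target):
    """Invert p_dep: the idle time at which p_dep reaches `target` (< 3/4)."""
    if not 0.0 <= target < 0.75:
        raise ValueError("target must lie in [0, 3/4)")
    return -3.0 / (4.0 * p) * math.log1p(-4.0 * target / 3.0)


p = 1e-3  # hypothetical error rate per unit time
for dt in (1.0, 10.0, 100.0, 1e3, 1e4):
    print(f"dt={dt:>8.0f}  p_dep={p_dep(p, dt):.6f}  linear p*dt={p * dt:.6f}")
```

The inverse is handy when a sweep is parameterized by the accumulated $p_{\text{dep}}$ rather than by $\Delta t$.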

The Tradeoff

With a wait time $\Delta t$ between SE rounds, the total number of rounds over a lifetime $T$ is $N = T/(\tau + \Delta t)$. Each round independently flips the logical observable with some probability $r(\Delta t)$ (from here on $r$ denotes this per-round logical flip probability, not the even-parity probability of the previous section) that depends on the code distance, the circuit noise $p_{\text{SE}}$, and the accumulated idle noise $p_{\text{dep}}(\Delta t)$. The probability of a logical failure, meaning an odd number of flips over all $N$ rounds, is

$$ P_{\text{fail}} = \frac{1 - (1 - 2r)^N}{2} $$

which saturates at $1/2$ for large $N$ or as $r \to 1/2$ (a fully randomized logical qubit has a 50-50 chance of being wrong). Two forces compete as $\Delta t$ increases:

- $N$ decreases — fewer rounds, fewer chances for a logical flip.

- $r(\Delta t)$ increases — each round sees more physical errors, making a logical flip more likely.

At small $\Delta t$, the idle noise is negligible compared to the SE circuit noise, so $r$ is nearly constant. Increasing $\Delta t$ barely hurts the per-round error but meaningfully reduces $N$, so the total failure probability drops. At large $\Delta t$, the idle noise dominates and $r$ grows rapidly — for a distance-$d$ code below threshold, $r$ scales roughly as the effective physical error rate to the power $(d+1)/2$, so even modest increases in noise compound badly. The minimum of $P_{\text{fail}}$ lies where these two effects balance.
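The competition can be illustrated with a toy model. The sketch below is not the simulation used in this post. It assumes the odd-flip formula $P_{\text{fail}} = (1 - (1 - 2r)^N)/2$ for $N$ independent rounds, and it invents a per-round model $r = A\,(p_{\text{eff}}/p_{\text{th}})^{(d+1)/2}$ with $p_{\text{eff}} = p_{\text{SE}} + p_{\text{dep}}(\Delta t)$. The prefactor $A = 0.1$, the threshold $p_{\text{th}} = 10^{-2}$, the idle rate, and the simple addition of the two noise sources are all hypothetical placeholders chosen only to produce the qualitative shape.

```python
import math


def p_dep(rate, dt):
    """Idle depolarizing probability accumulated over dt (closed form)."""
    return -0.75 * math.expm1(-4.0 * rate * dt / 3.0)


def p_fail(r, n_rounds):
    """Probability of an odd number of flips in n_rounds independent rounds."""
    if r >= 0.5:
        return 0.5  # fully randomized logical qubit
    # Equivalent to 0.5 * (1 - (1 - 2r)**n_rounds), but stable for tiny r.
    return -0.5 * math.expm1(n_rounds * math.log1p(-2.0 * r))


def toy_round_flip(p_eff, d, p_th=1e-2, prefactor=0.1):
    """HYPOTHETICAL per-round logical flip model; not fitted to any data."""
    return min(0.5, prefactor * (p_eff / p_th) ** ((d + 1) / 2))


def sweep(T, tau, p_se, idle_rate, d, n_points=200):
    """Evaluate P_fail on a log-spaced grid of idle times from 0.01*tau to 1000*tau."""
    curve = []
    for i in range(n_points):
        dt = tau * 10 ** (-2 + 5 * i / (n_points - 1))
        n_rounds = T / (tau + dt)  # treated as a continuous quantity
        r = toy_round_flip(p_se + p_dep(idle_rate, dt), d)
        curve.append((dt, p_fail(r, n_rounds)))
    return curve


# Times in microseconds: T = 1 ms, tau = 1 us; the idle rate is an assumed value.
curve = sweep(T=1000.0, tau=1.0, p_se=1e-3, idle_rate=1e-5, d=9)
dt_best, pf_best = min(curve, key=lambda pair: pair[1])
print(f"toy optimum: dt = {dt_best:.3g} us, P_fail = {pf_best:.3g}")
print(f"endpoints:   P_fail = {curve[0][1]:.3g} (short dt), {curve[-1][1]:.3g} (long dt)")
```

With these placeholder parameters the minimum sits in the interior of the sweep, with higher failure probability at both ends. The location of the toy optimum should not be read as a prediction, since it depends entirely on the assumed form of $r$.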

Where's the Optimum?

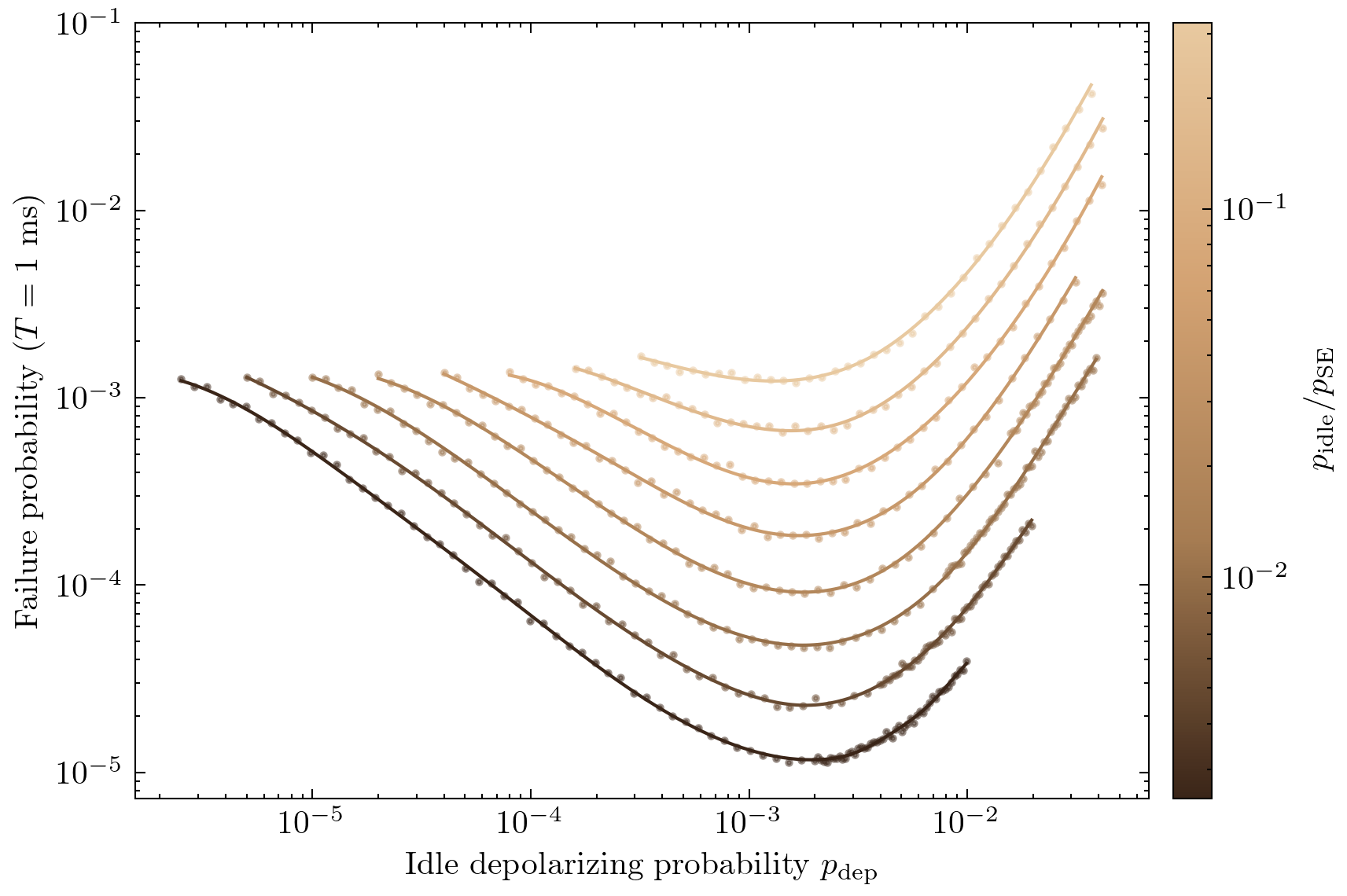

We simulate a distance-9 rotated surface code with SE circuit noise $p_{\text{SE}} = 10^{-3}$. For each of eight values of $p_{\text{idle}}$ — the idle depolarizing probability accumulated per SE round duration $\tau$ — spanning two decades, we sweep the idle time $\Delta t$, decode with pymatching, and extrapolate the total failure probability to a target lifetime of $T = 1\,\text{ms}$. Plotting against the accumulated idle depolarizing probability $p_{\text{dep}}(\Delta t)$ rather than $\Delta t$ itself makes the result obvious:

Every curve has a U-shape. On the left (small $p_{\text{dep}}$, frequent SE), the failure probability is high because the code pays for many rounds of circuit noise with little benefit — the idle errors were negligible anyway. On the right (large $p_{\text{dep}}$, infrequent SE), the accumulated idle errors overwhelm the decoder. The minima all line up around $p_{\text{dep}}(\Delta t^*) \approx 2\, p_{\text{SE}}$, regardless of $p_{\text{idle}}$.

Since $p_{\text{dep}} \approx p_{\text{idle}} \cdot (\Delta t / \tau)$ in the linear regime, the optimal number of SE-round durations to wait scales as

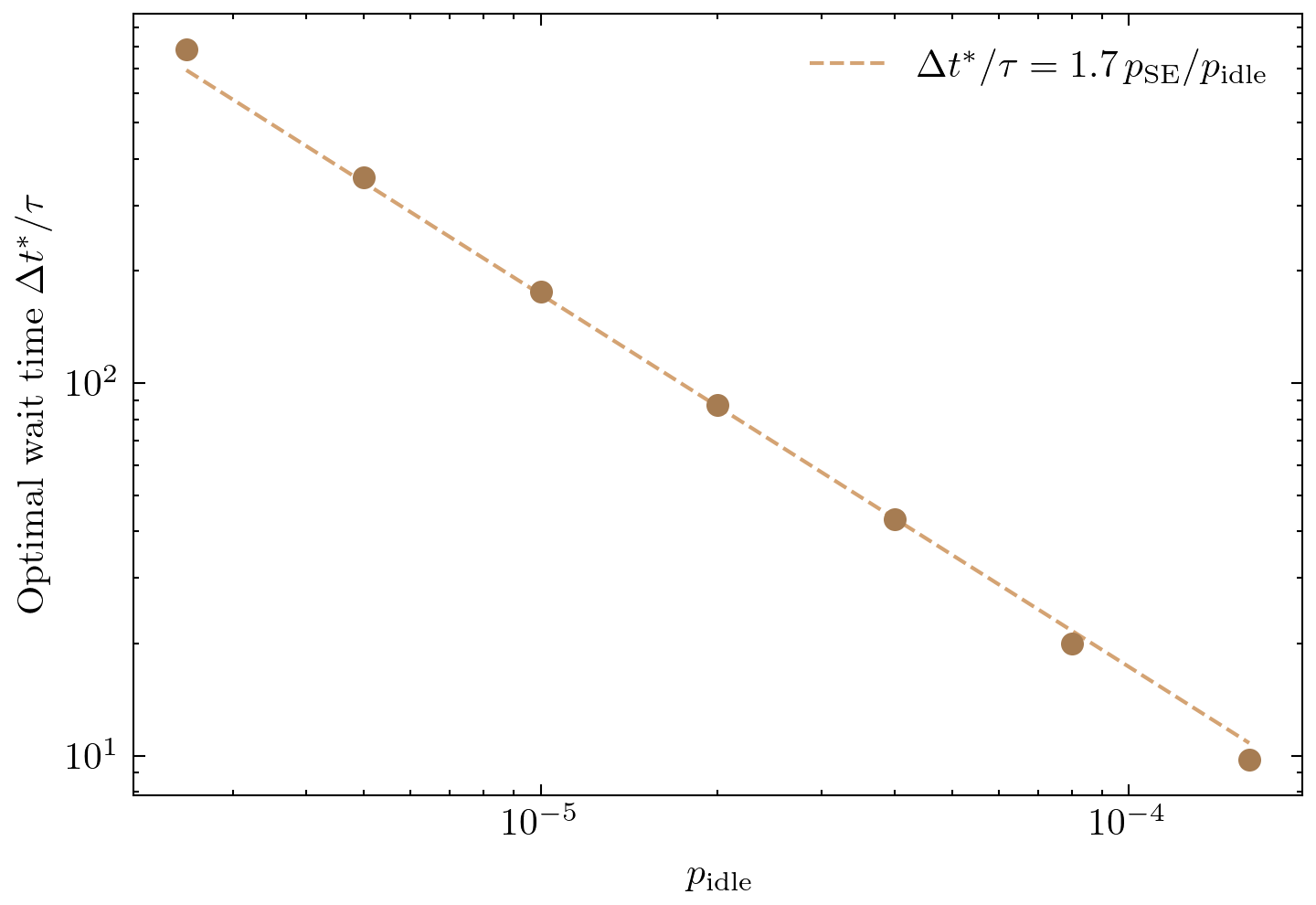

$$ \frac{\Delta t^*}{\tau} \approx c\,\frac{p_{\text{SE}}}{p_{\text{idle}}} $$for some constant $c$ of order 1. Fitting $c$ from the data with the exponent fixed at $-1$ gives $c \approx 1.7$:

Quieter qubits can idle proportionally longer between correction rounds — a factor of 10 reduction in $p_{\text{idle}}$ buys a factor of 10 more idle time. The constant $c \approx 1.7$ rather than exactly 1 reflects the fact that an SE round's total error budget includes gate depolarization, measurement flips, and reset flips, so the effective noise per round is somewhat larger than $p_{\text{SE}}$ alone.
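As a quick worked example of the scaling, the sketch below evaluates $\Delta t^* \approx c\,\tau\,p_{\text{SE}}/p_{\text{idle}}$ with the fitted $c \approx 1.7$, $\tau = 1\,\mu\text{s}$, and $p_{\text{SE}} = 10^{-3}$ from the text. The function name `optimal_wait` and the three $p_{\text{idle}}$ values are illustrative assumptions, and the estimate only makes sense in the linear regime where $p_{\text{dep}}$ is far below $3/4$.

```python
def optimal_wait(tau, p_se, p_idle, c=1.7):
    """Rule-of-thumb idle time between SE rounds (linear regime only)."""
    return c * tau * p_se / p_idle


tau = 1.0        # SE round duration in microseconds (example value from the text)
p_se = 1e-3      # SE circuit noise used in the simulation
lifetime = 1000.0  # target lifetime T = 1 ms, in microseconds

for p_idle in (1e-4, 1e-5, 1e-6):  # hypothetical idle error per SE round duration
    dt_star = optimal_wait(tau, p_se, p_idle)
    rounds = lifetime / (tau + dt_star)
    print(f"p_idle={p_idle:.0e}  dt*={dt_star:.4g} us  rounds over T={rounds:.3g}")
```

For the quietest case the suggested wait exceeds the 1 ms lifetime itself, which is a reminder that the rule of thumb describes steady-state spacing and says nothing about a minimum number of rounds.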

The Rule of Thumb

The result — do SE when the accumulated error since the last round is comparable to the error introduced by the round itself — is folklore in quantum error correction. The logic is general: any source of error that accumulates between SE rounds (idling, crosstalk, leakage) should be kept roughly in balance with the error budget of the SE circuit. Doing SE more often than this buys diminishing returns; doing it less often wastes the code's correction capacity on errors that could have been caught earlier.

The same principle applies beyond memory. In a logical circuit built from transversal gates (Hadamard, CNOT, etc.), the question becomes: how many gates can I execute between SE rounds? Each gate introduces some error, and that error accumulates until the next round of syndrome extraction corrects it. The answer is the same — do SE once the accumulated gate errors are comparable to the SE round's own error budget. Pack in as many cheap gates as the code can tolerate before paying the cost of a correction round.

Citing this post

    @misc{gu2026setiming,
      author = {Andi Gu},
      title = {Optimal Syndrome Extraction Timing},
      year = {2026},
      url = {https://andigu.github.io/blog/se-round-timing/}
    }